Docker for bioinformatics: reproducibility at the core

Reproducibility in science is key. How can containerisation tools such as Docker help bioinformatics and bioinformaticians?

Science has a reproducibility crisis, and this is as true for computer-based methods as it is for wet lab experiments. How can containerisation tools such as Docker help bioinformaticians to ensure that data-driven approaches are reproducible?

Alice Minotto, who manages CyVerse UK in the Rob Davey Group at EI, recently spoke at DockerCon 19 in San Francisco, where she wowed the audience with a fascinating journey through science history and ancient mythology to explain why tools such as Docker are invaluable for bioinformaticians and for ensuring that data-driven science is reproducible.

Famous for his astronomical discoveries and his troubles with the church, Galileo is often considered the father of modern science. He introduced his scientific method: a three-step process.

This all might seem quite obvious to us today, though it wasn’t at the time. Yet Alice points out that he was still missing something very important. This missing aspect is one of the main dogmas of modern science, introduced by Boyle: reproducibility.

Boyle is famous for his law on gases, which we all probably studied at school and then forgot again soon afterwards. During his life as a scientist, he found himself in a predicament: he was trying to build an air pump, something a colleague in the Netherlands had done in order to carry out an experiment, but he could not get it to work.

Indeed, he was forced to write to his colleague, saying in effect: I can’t do this, and nobody else around me seems to be able to do it either, so if no-one can do this, no-one over here in England is going to believe your findings. The story had a happy ending: his colleague travelled to England, helped build the air pump, and there they were able to reproduce his results.

The point is - if you do an experiment but produce the results only once, there’s a high likelihood that it’s down to chance. If you want to be more sure that a phenomenon is real, and not down to chance, then it must be reproduced again and again and again.

We may say that a phenomenon is experimentally demonstrable when we know how to conduct an experiment which will rarely fail to give us statistically significant results.

- R. Fisher

Today, this idea of reproducibility is firmly lodged in the mindset of budding scientists from the outset.

Alice’s partner (me), while studying Plant Sciences at university in Manchester, ate some kiwi, after which his throat became so badly inflamed and swollen that he couldn’t swallow or breathe. He managed to take a load of antihistamines and thankfully survived.

Of course, all of his classmates said, “are you sure it was the kiwi? We think you should try again to make sure it was the kiwi.”

Happily he’s alive and didn’t do that - he’s severely allergic to kiwi and should really carry his EpiPen everywhere he goes - but you have to admire the scientific mindset.

(This cartoon is not far from reality, when it comes to kiwi allergies and science students…)

Yet, despite this mindset, we find ourselves in quite a bad situation nowadays: a reproducibility crisis.

The term stems from psychology, but the crisis spans many fields of science and the life sciences are highly affected. The numbers vary, but as few as 11-25% of cancer research studies are reproducible.

This was raised as an issue by pharmaceutical companies and academics alike, because companies take information from university studies to help inform new drug discovery, and a lot of these studies don’t get to the preclinical or clinical stage because the initial findings cannot be reproduced at all.

This is not just a problem in science; this is a problem for all of us. In 2015, the USA spent $18 billion on non-reproducible medical research, which is a huge waste.

If you ask scientists why this is happening, they will give you different reasons. The most important of these, as is often the case, are time and money - wet lab experiments can be very expensive and are often incredibly time consuming.

Meanwhile, there is career progression of the scientists involved that we have to keep in mind. You just don’t get the same recognition if you do something that has already been done compared to if you do something new and previously unpublished.

But replicating science is hugely important, and it’s good if we find errors and then change our judgement. This is all part of how science should work.

Take the instance in which a science paper seemed to have discovered that DNA could be made up of arsenic rather than phosphorus, based on life found in a caustic lake. This was so groundbreaking that people tried to reproduce the results, and fortunately they found that this was not the case and that the initial results were perhaps misleading. It wasn’t the end of the world (but perhaps the end for the idea of arsenic life).

This was all in good faith, and this sort of thing happens quite often in science.

The problem with not reproducing research in the first place is that we leave the door open for worse situations to occur: in the most heinous of cases, scientific misconduct and fraud, which have the power to endanger lives.

Perhaps the most infamous case of this was the supposed link identified between the MMR vaccine and autism and bowel disease.

The measles vaccine is quite old, originating in the sixties before being combined with those for mumps and rubella in the seventies, and of course everything was fine: people stopped getting measles, mumps and rubella, diseases which can be deadly, particularly if pregnant women catch them and pass them on congenitally.

Then in the 1990s, a doctor - who is not a doctor anymore because he was rightfully struck off following this event - published a paper in The Lancet claiming this completely false link.

Now, this is far from harmless. There are real life consequences. The vaccination rate dropped significantly in many countries following the release of this paper and the subsequent article in the Daily Mail, which is understandable as many parents were scared. This is still the reason that many developed countries fall below the threshold for herd immunity - which protects the population as a whole from the spread of these diseases.

This former doctor and fraudster failed to disclose that he had a significant conflict of interest when publishing his findings. He was being paid by lawyers acting in a court case against the manufacturers of the vaccine, while also patenting his own alternative version of the vaccine.

All the more reason, therefore, we should put increased effort into reproducibility.

Nowadays a lot of biology still takes place in the lab, but increasingly we perform large scale experiments on computers.

You would be forgiven for thinking that once we involve computers in the analysis of data, we might do away with the problem of reproducibility because surely, obstacles such as money and time aren’t so relevant anymore.

This is partially true, but not often the case in real life. We have to consider who is writing the code, for example, and many of the programs written in the field of bioinformatics are perhaps not “business-ready”.

Common problems are that software is often hard to install (even for someone who knows how to do it) and often lacks proper documentation, yet it still needs to be used by people who are perhaps in a similar situation to the person who coded the software in the first instance.

This can take a lot of time and effort, and researchers can end up abandoning a piece of software altogether because there was no solution. This problem is exacerbated by the fact that people are paid on temporary contracts to write pieces of software that cannot be supported afterwards, because the author has moved on to a new institute and a new project. Therefore, much computationally-driven life science research still can’t be reproduced.

A game changer has been the introduction of containers for packaging bioinformatics software.

Now, we can provide people with the necessary software in an executable file if needs be, so they can just click on it and everything works hunky-dory. The usefulness of containers is so widely recognised by the community that ELIXIR - a Europe-wide bioinformatics network - recently had a course called “Reproducibility and docker”.

Among all of the available container tools, Docker is getting a lot of attention because it is quite simple and easy to use.

Why is this important to us in a bioinformatics institute?

Most programs are not as standalone as you may imagine: they rely on other pieces of software previously written by someone else. A common piece of advice in IT is not to reinvent the wheel!

These programs or libraries are referred to as dependencies, and they need to be available for our main program to run. It can sometimes be very tedious and complicated to get hold of all of these, but that’s another story.
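To make the trouble with dependencies a little more concrete, here is a minimal Python sketch that compares the tool versions recorded on two machines. The function name `diff_environments` and the version records for the two laptops are invented purely for illustration; they do not come from any real pipeline.

```python
def diff_environments(mine, theirs):
    """Report tools whose versions differ, or that exist on one machine only."""
    problems = {}
    for tool in sorted(set(mine) | set(theirs)):
        a = mine.get(tool, "missing")
        b = theirs.get(tool, "missing")
        if a != b:
            problems[tool] = (a, b)
    return problems


# Hypothetical version records from two researchers' laptops.
alice = {"python": "3.7.3", "samtools": "1.9", "bwa": "0.7.17"}
bob = {"python": "3.6.8", "samtools": "1.9"}

for tool, (a, b) in diff_environments(alice, bob).items():
    print(f"{tool}: {a} on one machine, {b} on the other")
```

Every line this prints is a potential reason why the same analysis might behave differently, or not run at all, on the second machine.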

Containers are a way to package applications and all their dependencies and libraries together. This is somewhat similar to virtual machines, but more lightweight and quicker to get running.

Let’s just imagine the piles of containers in a port: we can easily ship anything inside the containers, and they make it easier for us to do so by providing a common external structure. In the same way we can package an application into a container and send it to a coworker, or run it on different computers, all without worrying any more about being in different environments.
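As a rough illustration of what "packaging an application with its dependencies" can look like, the Python sketch below writes out the text of a very small Dockerfile. The helper `render_dockerfile` is hypothetical, and the base image `ubuntu:18.04` and the `samtools` package are only example choices, not a recommendation from Alice's talk.

```python
import json


def render_dockerfile(base_image, apt_packages, command):
    """Build the text of a simple Dockerfile from three ingredients."""
    lines = [f"FROM {base_image}"]  # the starting operating system image
    if apt_packages:
        pkgs = " ".join(sorted(apt_packages))
        # Install the dependencies inside the image, then clean the apt cache.
        lines.append(
            "RUN apt-get update && apt-get install -y " + pkgs
            + " && rm -rf /var/lib/apt/lists/*"
        )
    # Exec form of CMD: a JSON array with one string per argument.
    lines.append("CMD " + json.dumps(command))
    return "\n".join(lines) + "\n"


# Example choices only: an Ubuntu base image with one bioinformatics tool.
print(render_dockerfile("ubuntu:18.04", ["samtools"], ["samtools", "--version"]))
```

Saved as a file named `Dockerfile`, text like this could be turned into an image with `docker build`, and that image is the "shipping container" that a coworker can run without installing the dependencies themselves.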

Let’s get into some Greek Mythology for a second.

Take the story of Athena, the Greek Goddess of war and, among other things, weavers, and Arachne, the weaver. Arachne was such a good weaver that she bragged that she could have beaten Athena in a competition.

This really annoyed Athena, in line with the Envy of the Gods: the premise that the human condition did not permit someone to be too good or too lucky at anything. It ended with Athena turning poor Arachne into a spider (which is, obviously, why spiders nowadays make cobwebs).

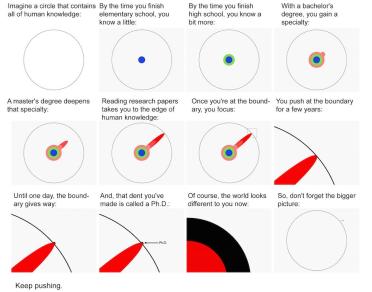

To put this into a modern perspective, let’s consider someone who is doing a PhD nowadays - and there’s a quite famous graph that represents this nicely thanks to Matt Might’s “The Illustrated Guide to a PhD”.

The white circle represents all of human knowledge, while the coloured sections show the various levels of knowledge obtained through education. So at first, you start learning a bit of everything, but then you get to a certain point - your bachelors, masters, PhD - and you then kind-of race off right to the edge of human knowledge, and maybe push it even a bit further.

The problem here is that, since you can’t really know everything, if you’re already there as an expert neuroscientist or evolutionary biologist, you can’t be expected to also be an expert computer developer or system administrator. It’s not reasonable, nowadays, to expect this.

That’s why it’s great that there are projects such as BioContainers that do the job for the scientist, with thousands of pieces of software ready for scientists to use.

Then there are projects, such as CyVerse UK, that use containers as part of a stack that improves the reproducibility of research - making software available and usable to scientists across the world.

Alice points out that she knows Docker is easy to use because, first of all, she learned it herself just a few years ago, and also because last year she travelled with her group to Kenya as part of a course on bioinformatics, where she led some training for a week.

All of the attendees were biologists, biotechnologists and agronomists, mostly with little to no background in tech. The course was intended to explain very basic system administration, such as virtual machines, ssh keys and so forth.

After two days came training in Docker, and the hardest concept to grasp for the group was the difference between an image and a container. After this initial explanation, everything was fine and the trainees were able to make their own images, to use and share them, and we were left after one week with a quote from one of the guys who said:

He really appreciated the potential that Docker would bring to his own research group.
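The image versus container distinction that the trainees found hardest can be pictured with a loose analogy in plain Python. This is not Docker's API, and the class names, image name and file paths are all made up for illustration: the image is treated as a read-only template, and each container as a running instance with its own private changes.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Image:
    """A read-only template: a name plus the files baked in at build time."""
    name: str
    files: tuple


@dataclass
class Container:
    """A running instance of an image with its own private, writable state."""
    image: Image
    written: list = field(default_factory=list)

    def write(self, path):
        # Changes live in the container only; the image is never modified.
        self.written.append(path)

    def listing(self):
        return list(self.image.files) + self.written


# Hypothetical image name and paths, for illustration only.
image = Image("my-analysis:1.0", ("/usr/bin/tool",))
first, second = Container(image), Container(image)
first.write("/results/run1.txt")

print(first.listing())
print(second.listing())
print(image.files)
```

Two containers started from the same image begin identical, and whatever one of them writes is visible neither to the other nor to the image itself, which is why sharing the image gives everyone the same starting point.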

Thanks to platforms such as Docker, which allow us to distribute bioinformatics packages in an easy, usable manner to people who aren’t necessarily experts in computer programming and software development, we are increasingly able to help biologists and bioinformaticians perform modern, data-driven science with the reproducibility that we all desire.